First Generation Computers | Vibepedia

First generation computers represent the dawn of electronic digital computing, characterized by their immense size, reliance on vacuum tubes for circuitry…

Contents

- 🎵 Origins & History

- ⚙️ How It Works

- 📊 Key Facts & Numbers

- 👥 Key People & Organizations

- 🌍 Cultural Impact & Influence

- ⚡ Current State & Latest Developments

- 🤔 Controversies & Debates

- 🔮 Future Outlook & Predictions

- 💡 Practical Applications

- 📚 Related Topics & Deeper Reading

- Frequently Asked Questions

- References

- Related Topics

Overview

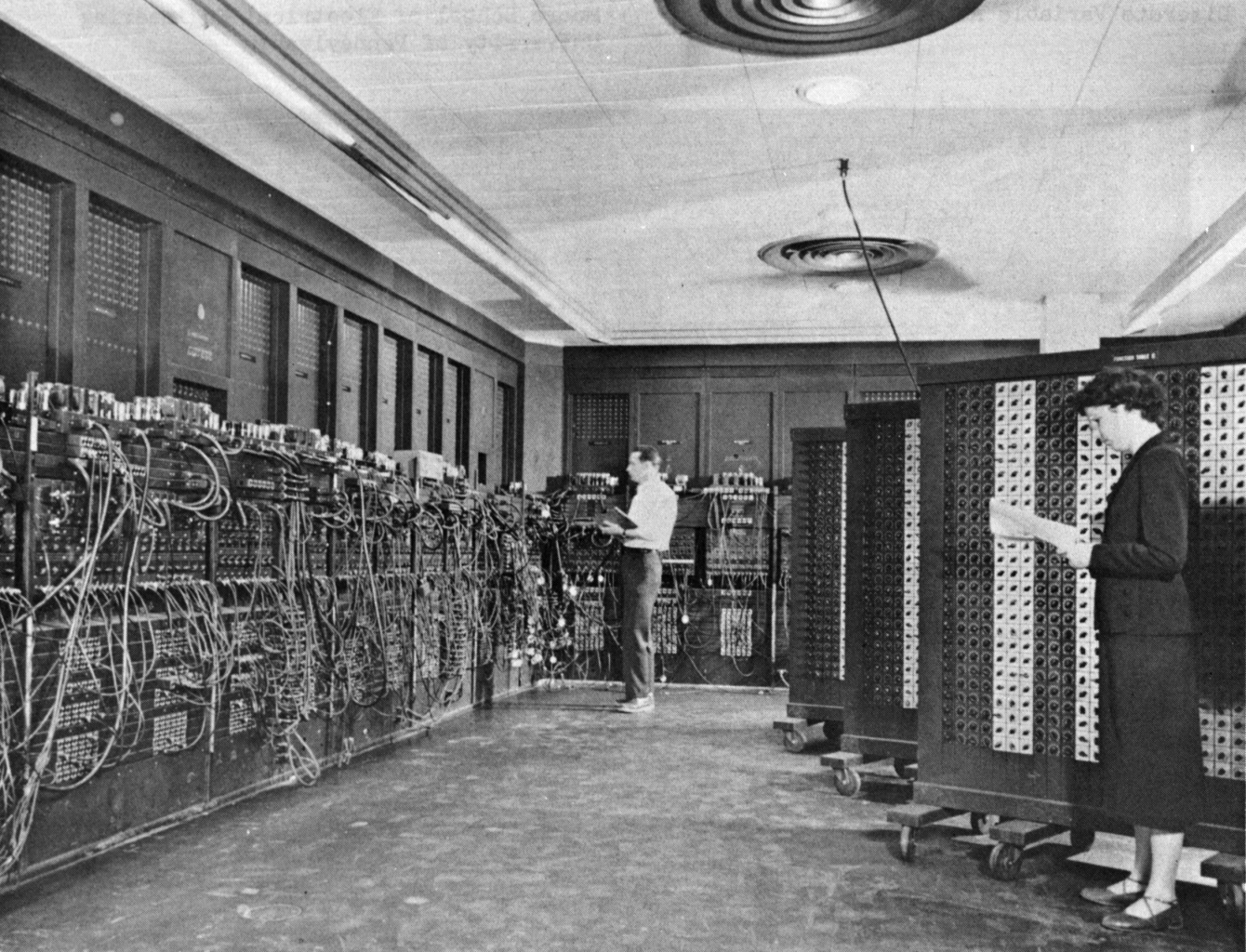

The genesis of first generation computers can be traced to wartime necessity and the ambitions of scientists and engineers. While mechanical designs such as Charles Babbage's Analytical Engine anticipated programmable computation, it was the vacuum tube that made electronic digital computers feasible. The University of Pennsylvania's Moore School of Electrical Engineering was a crucible for innovation, leading to the development of the ENIAC (Electronic Numerical Integrator and Computer), completed in 1945. This behemoth, initially conceived for military ballistics calculations, demonstrated the power of electronic switching. That same year, John von Neumann's "First Draft of a Report on the EDVAC" set out the stored-program architecture, in which instructions are held in memory alongside data rather than set up through wiring and switches, and it reshaped computer design. The UNIVAC I (Universal Automatic Computer I), delivered to the U.S. Census Bureau in 1951, became the first commercially successful electronic digital computer in the United States, marking the transition from experimental machines to practical, albeit still massive, business tools.

⚙️ How It Works

At their core, first generation computers used vacuum tubes as their primary switching and amplification components. Thousands, sometimes tens of thousands, of these glass tubes formed the logic gates and registers. Data was typically input via punched cards or paper tape, and output was often printed or displayed on rudimentary screens. Memory was a critical bottleneck: mercury delay lines were volatile and could only be read serially, while magnetic drums retained their contents without power but forced the processor to wait for the drum to rotate to the right position. Programming was a painstaking, low-level process. On the ENIAC it meant setting switches and plugging patch cords to define the machine's operations; on later stored-program machines it meant writing machine language, the numeric instruction codes the hardware executes directly. Either way, software development was complex and error-prone. The sheer physical scale and power consumption meant these machines generated substantial heat, necessitating elaborate cooling systems, often involving industrial fans and air conditioning units.
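To make the memory bottleneck concrete, here is a minimal sketch of a recirculating serial memory in the spirit of a delay line. It is a toy model, not a description of any specific machine: the class name `DelayLineMemory`, the word count, and the "tick" unit are all assumptions made for illustration. The point it shows is that a word can only be touched when it comes around to the output end, so access time depends on where the word happens to be in the loop.

```python
from collections import deque


class DelayLineMemory:
    """Toy model of a recirculating (delay-line style) serial memory.

    Words circulate in a loop and only the word currently at the output
    end can be read or rewritten. Sizes and timing units are hypothetical.
    """

    def __init__(self, num_words: int) -> None:
        self._line = deque([0] * num_words)
        self._head = 0   # address of the word currently at the output end
        self.ticks = 0   # elapsed word-times, a stand-in for access latency

    def _step(self) -> None:
        # The word leaving the line is fed back in at the input end.
        self._line.rotate(-1)
        self._head = (self._head + 1) % len(self._line)
        self.ticks += 1

    def _wait_for(self, address: int) -> None:
        if not 0 <= address < len(self._line):
            raise IndexError(f"address out of range: {address}")
        # Nothing can be done until the wanted word comes around.
        while self._head != address:
            self._step()

    def read(self, address: int) -> int:
        self._wait_for(address)
        return self._line[0]

    def write(self, address: int, value: int) -> None:
        self._wait_for(address)
        self._line[0] = value


mem = DelayLineMemory(8)
mem.write(5, 42)      # waits 5 word-times for address 5 to arrive
value = mem.read(5)   # no extra wait: the word is still at the output
mem.read(4)           # nearly a full circulation: 7 more word-times
print(value, mem.ticks)
```

Reading the address just behind the current one costs almost a full circulation, which is why programmers of serial-memory machines cared about where instructions and data were placed.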

📊 Key Facts & Numbers

The scale of first generation computers was staggering. The ENIAC, for instance, contained 17,468 vacuum tubes, weighed about 30 tons, and occupied 1,800 square feet of floor space. It consumed around 150 kilowatts of power. Early magnetic drums could store a mere 1,000 to 4,000 words of data, a minuscule amount compared to modern storage capacities. The IBM 701, introduced in 1952, cost approximately $1.5 million to $2 million (equivalent to over $15 million today) and was leased at rates of $15,000 to $20,000 per month. Setting up the ENIAC for a new problem could take weeks, a stark contrast to the seconds it takes to load a new program on a modern machine. Tube failures were also a significant issue; it is estimated that the ENIAC experienced one every few days, requiring constant maintenance by a team of technicians.
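A few lines of arithmetic help put these figures in proportion. The sketch below uses only the numbers quoted above, plus one loudly labeled assumption: "every few days" is read as one failure every 2 days, and failures are treated as independent and evenly spread across all tubes. The function names are hypothetical helpers, and the results are rough orders of magnitude, not historical measurements.

```python
TUBE_COUNT = 17_468                  # ENIAC tube count quoted above
POWER_KW = 150                       # ENIAC power draw quoted above
LEASE_PER_MONTH = (15_000, 20_000)   # IBM 701 lease range quoted above, USD

# ASSUMPTION: "every few days" is taken as one failure every 2 days.
ASSUMED_DAYS_BETWEEN_FAILURES = 2.0


def implied_tube_lifetime_years(tube_count, days_between_failures):
    """Mean life per tube if failures are independent and evenly spread."""
    return tube_count * days_between_failures / 365.0


def annual_lease(monthly_range):
    """Convert a (low, high) monthly lease range to a yearly range."""
    return tuple(12 * amount for amount in monthly_range)


def daily_energy_kwh(power_kw, hours=24.0):
    """Energy used in a day of continuous operation."""
    return power_kw * hours


lifetime = implied_tube_lifetime_years(TUBE_COUNT, ASSUMED_DAYS_BETWEEN_FAILURES)
print(f"implied mean tube life: about {lifetime:.1f} years")
print(f"annual lease range: {annual_lease(LEASE_PER_MONTH)}")
print(f"energy per day: {daily_energy_kwh(POWER_KW)} kWh")
```

Under these assumptions each individual tube would have to be very long-lived for the machine as a whole to fail only every couple of days, which illustrates why reliability was a system-level problem: even good components fail often when there are more than 17,000 of them.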

👥 Key People & Organizations

Several key individuals and organizations were instrumental in the development of first generation computers. John Mauchly and J. Presper Eckert were the chief architects of the ENIAC at the University of Pennsylvania. John von Neumann's theoretical contributions, particularly his work on stored-program architecture, were foundational. The U.S. Army was a crucial early funder and user, commissioning the ENIAC for ballistics calculations, while wartime code-breaking drove parallel efforts such as Britain's Colossus. IBM, initially hesitant, eventually entered the market with the IBM 701 and later the more successful IBM 650, becoming a dominant force in the nascent computer industry. The RAND Corporation also played a role in theoretical computer science and the development of early computing concepts.

🌍 Cultural Impact & Influence

The advent of first generation computers fundamentally altered the trajectory of science, engineering, and business. They enabled complex scientific simulations and calculations that were previously impossible, accelerating fields like physics, meteorology, and cryptography. For the first time, large-scale data processing became a reality, with machines like the UNIVAC I being used for census tabulation and business forecasting. This era also saw the birth of the computer programmer as a profession, albeit one dealing with highly technical, machine-level instructions. The sheer novelty and power of these machines captured the public imagination, fueling both awe and apprehension about the future of automation, as depicted in early science fiction and news reports. The cultural impact was profound, planting the seeds for the information age.

⚡ Current State & Latest Developments

While first generation computers are long obsolete, their legacy is omnipresent in the digital devices we use today. The fundamental principles of binary logic, stored programs, and electronic switching, pioneered by these early machines, remain the bedrock of modern computing. The concepts of input, processing, output, and storage are direct descendants of their architecture. The challenges faced in terms of size, power consumption, and reliability spurred the research that eventually led to the transistor and integrated circuit, ushering in subsequent generations of computers. The very idea of a general-purpose electronic computer, capable of executing diverse tasks, was firmly established by these pioneering systems.
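The stored-program principle mentioned above can be illustrated with a toy machine in which instructions and data share one memory. This is a sketch under stated assumptions: the four opcodes (`LOAD`, `ADD`, `STORE`, `HALT`), the single accumulator, and the `run` function are invented for illustration and do not reproduce the instruction set of the EDVAC or any other real machine.

```python
def run(memory, max_steps=1000):
    """Execute a toy stored-program machine and return its memory.

    memory is one flat list holding both instructions and data.
    Instructions are (opcode, operand) tuples; data cells are ints.
    The opcodes are hypothetical, chosen only for this example.
    """
    acc = 0   # accumulator
    pc = 0    # program counter: address of the next instruction
    for _ in range(max_steps):
        opcode, operand = memory[pc]   # fetch
        pc += 1
        if opcode == "LOAD":           # decode and execute
            acc = memory[operand]
        elif opcode == "ADD":
            acc += memory[operand]
        elif opcode == "STORE":
            memory[operand] = acc
        elif opcode == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    raise RuntimeError("step limit reached without HALT")


program = [
    ("LOAD", 4),    # address 0: acc = memory[4]
    ("ADD", 5),     # address 1: acc += memory[5]
    ("STORE", 6),   # address 2: memory[6] = acc
    ("HALT", 0),    # address 3: stop
    2,              # address 4: data
    3,              # address 5: data
    0,              # address 6: result is written here
]

result = run(list(program))
print(result[6])
```

Changing what the machine computes means loading different contents into memory, not rewiring anything, which is the practical difference between this model and plugboard-style setup.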

🤔 Controversies & Debates

One of the most significant debates surrounding first generation computers centers on the precise definition of 'computer' and who deserves credit for its invention. While ENIAC is often cited, some historians point to earlier, less publicized machines like the Colossus computer used by British codebreakers during World War II. Its existence was kept secret for decades, which complicates any account of priority based only on publicly known machines. Another point of contention is the efficiency and practicality of programming. While revolutionary for their time, the manual rewiring and switch-setting methods were so cumbersome that some question whether these machines were 'programmable' in the modern sense. The immense cost and specialized nature of these machines also fueled discussions about accessibility and the potential for a widening technological divide.

🔮 Future Outlook & Predictions

The trajectory from first generation computers to today's devices is a story of relentless miniaturization and exponential performance gains. The pioneers of the 1940s and 1950s may not have foreseen smartphones or the internet, but they did anticipate increasingly powerful and accessible computing. The lessons learned from the limitations of vacuum tubes directly informed the development of transistor-based second-generation machines, which were smaller, faster, and more reliable. This evolutionary path, later summarized for integrated circuits by Moore's Law and sustained by continuous innovation in microelectronics, suggests that future computing will likely be even more integrated into our lives, potentially through advancements in quantum computing, neuromorphic architectures, and ubiquitous embedded systems. The core quest for faster, smaller, and more efficient computation continues.

💡 Practical Applications

The practical applications of first generation computers were primarily in scientific research and military operations. They were used for complex mathematical calculations such as trajectory analysis for artillery shells, nuclear physics simulations, and code-breaking during World War II. The UNIVAC I was one of the first to be applied to business problems, including actuarial calculations for insurance companies and inventory management. These machines were also crucial in early weather forecasting models, allowing meteorologists to process vast amounts of atmospheric data. While not used for everyday tasks, their ability to handle large datasets and perform complex computations laid the groundwork for the data-driven decision-making that characterizes modern industries.

Key Facts

- Year: 1945-1955

- Origin: United States

- Category: technology

- Type: technology

Frequently Asked Questions

What made first generation computers so different from modern ones?

First generation computers were vastly different due to their reliance on thousands of vacuum tubes for processing and memory, making them enormous, power-hungry, and prone to frequent breakdowns. Unlike today's sleek devices, these machines occupied entire rooms, required dedicated cooling systems, and were programmed using machine language via switches and patch panels. Their storage capacity was measured in thousands of words, a stark contrast to the terabytes common today. The sheer scale and manual operation made them impractical for widespread use, primarily limiting them to specialized scientific and military applications.

What were the most famous examples of first generation computers?

The most iconic examples include the ENIAC (Electronic Numerical Integrator and Computer), often cited as the first general-purpose electronic digital computer, completed in 1945. Another landmark was the UNIVAC I (Universal Automatic Computer I), the first commercially successful electronic digital computer in the United States, delivered to the U.S. Census Bureau in 1951. Other notable machines include the EDVAC (Electronic Discrete Variable Automatic Computer), which implemented John von Neumann's stored-program concept, and the IBM 701, which marked IBM's entry into the large-scale computer market.

How much did first generation computers cost and how were they programmed?

First generation computers were astronomically expensive, with machines like the UNIVAC I costing around $1 million (over $10 million today) and the IBM 701 leasing for $15,000-$20,000 per month. Programming was done at the lowest level. On the ENIAC this meant manually setting switches on control panels and physically rewiring circuits with patch cords to define the sequence of operations, and a single programming task could take weeks to complete. On stored-program machines, programmers wrote machine language directly and loaded it from punched cards or tape. Both approaches were time-consuming and error-prone. High-level programming languages and operating systems as we understand them today were largely absent.

What were the primary challenges faced by first generation computers?

The primary challenges were immense: reliability, heat generation, power consumption, and programming complexity. Vacuum tubes had a high failure rate, meaning machines like ENIAC would experience tube failures every few days, requiring constant maintenance. The sheer number of tubes generated significant heat, necessitating powerful, noisy cooling systems. Their power draw was enormous, often exceeding 100 kilowatts. Furthermore, programming was a highly specialized and laborious task, requiring deep understanding of the machine's architecture and manual configuration, making software development a major bottleneck.

Did first generation computers have any impact on later computer generations?

Absolutely. First generation computers were the crucial proof-of-concept that electronic digital computation was viable. They established fundamental principles like the stored-program architecture, pioneered by John von Neumann, which is still in use today. The limitations of vacuum tubes directly motivated the search for more reliable and efficient components, leading to the invention of the transistor and the subsequent second generation of computers. The early applications in science and military also demonstrated the immense potential of computing, driving further investment and research that shaped the entire field of computer science.

Where can I see a first generation computer today?

Several museums around the world house preserved examples of first generation computers. The ENIAC no longer exists as a single machine; surviving panels are held by several institutions, including the University of Pennsylvania in Philadelphia and the Smithsonian Institution, and none of them is operational. The Computer History Museum in Mountain View, California, has numerous artifacts from this era, including components and related machines. Other science and technology museums may also feature exhibits on early computing history, offering a glimpse into these monumental machines.

What was the role of the military in the development of first generation computers?

The military played a pivotal role, both as a funder and a primary user. The ENIAC was developed under contract with the U.S. Army's Ballistic Research Laboratory to calculate artillery firing tables. The need for rapid and complex calculations for code-breaking during World War II also spurred significant investment and innovation, though much of this work, like the Colossus machines in Britain, was kept secret for decades. The military's demand for computational power was a major driving force behind the engineering breakthroughs that characterized this era.