Deep Learning Techniques | Vibepedia

Deep learning techniques represent a powerful subset of machine learning, characterized by the use of artificial neural networks with multiple layers (hence…

Overview

The conceptual seeds of deep learning were sown in the mid-20th century with early work on artificial neurons. However, limited computational power and data, together with theoretical hurdles such as the vanishing gradient problem, relegated these ideas to the academic fringe for decades. A significant resurgence began with Yann LeCun's development of Convolutional Neural Networks (CNNs) for character recognition and was further propelled by Geoffrey Hinton's work on Deep Belief Networks (DBNs) in 2006. The true explosion, however, came in 2012, when AlexNet, built by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, dramatically outperformed all competitors in the ImageNet competition, showcasing the power of deep CNNs trained on massive datasets using GPUs.

⚙️ How It Works

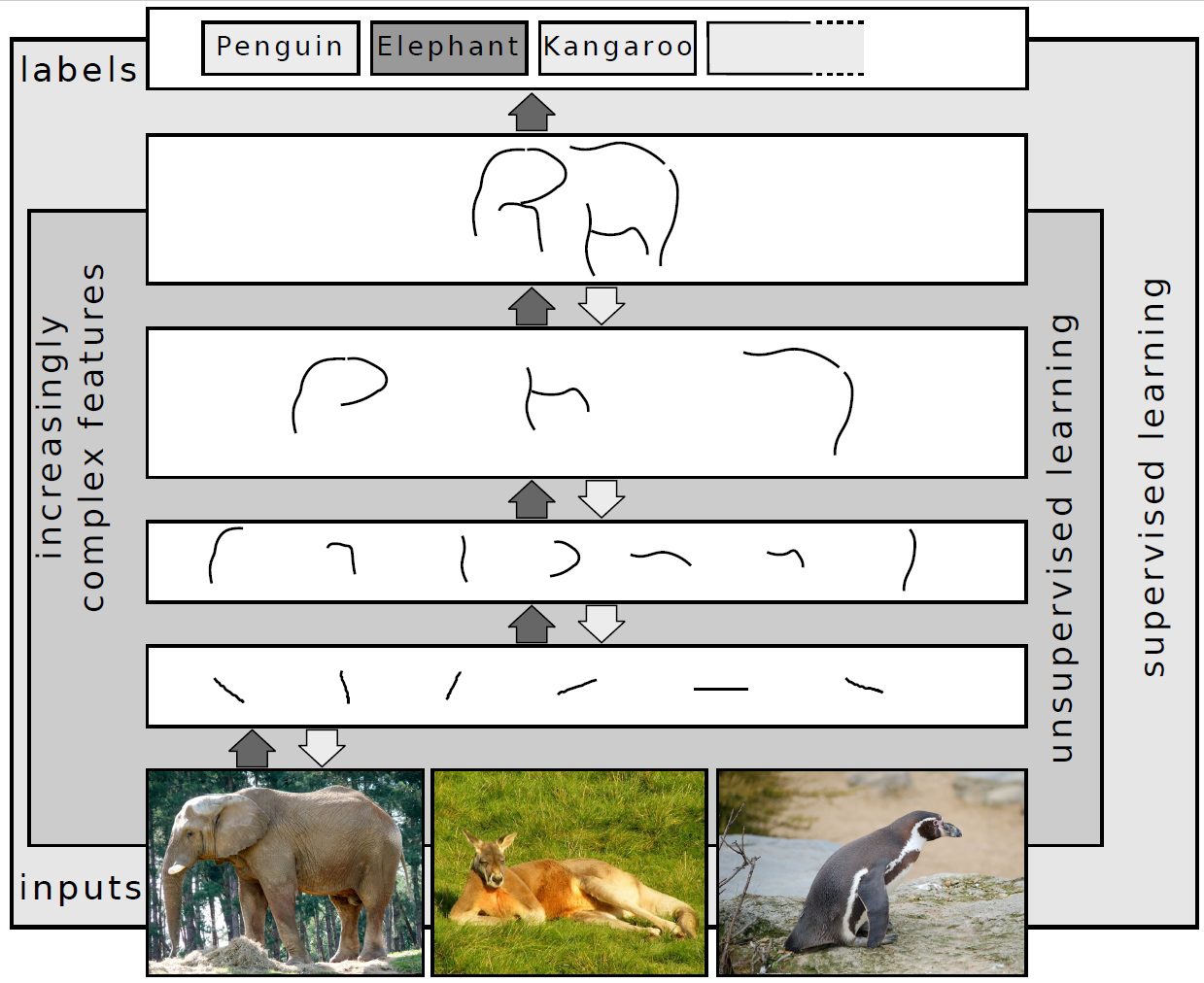

At its heart, deep learning operates by constructing artificial neural networks with numerous interconnected layers. Each layer consists of artificial neurons, which are simple computational units that receive inputs, compute a weighted sum of them (usually plus a bias term), and pass the result through a nonlinear activation function to produce an output. The 'deep' aspect refers to having multiple hidden layers between the input and output layers. During training, a process known as backpropagation computes how much each connection weight contributed to the error between the network's predictions and the actual target values, and an optimizer such as gradient descent uses those gradients to adjust the weights. This iterative refinement allows the network to learn hierarchical representations of data, where early layers detect simple features (e.g., edges in an image) and deeper layers combine these to recognize more complex patterns (e.g., objects). Key architectures like CNNs excel at spatial data such as images, while Recurrent Neural Networks (RNNs) and Transformers are adept at sequential data such as text and time series.
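The mechanics described above can be made concrete with a very small example. The following is a minimal sketch in plain Python, not a production implementation: it assumes a sigmoid activation, a squared-error loss, one hidden layer, and full-batch gradient descent, and every name in it (`neuron`, `forward`, `train_step`, `init_params`, `XOR_DATA`) is hypothetical and chosen only for illustration. Real systems use many more layers and rely on frameworks that compute the gradients automatically.

```python
import math
import random

def sigmoid(z):
    """Activation function (an assumption; many networks use ReLU instead)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of inputs plus bias, then activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

def init_params(n_in, n_hidden, seed=0):
    """Small random weights for one hidden layer and one output neuron."""
    rng = random.Random(seed)
    return {
        "W1": [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)],
        "b1": [0.0] * n_hidden,
        "W2": [rng.uniform(-1, 1) for _ in range(n_hidden)],
        "b2": 0.0,
    }

def forward(x, params):
    """Forward pass: input -> hidden layer -> single output."""
    hidden = [neuron(x, w, b) for w, b in zip(params["W1"], params["b1"])]
    output = neuron(hidden, params["W2"], params["b2"])
    return hidden, output

def mean_squared_error(data, params):
    return sum((forward(x, params)[1] - y) ** 2 for x, y in data) / len(data)

def train_step(data, params, lr):
    """One full-batch gradient descent step, with gradients from backpropagation."""
    n_hidden = len(params["W1"])
    n_in = len(params["W1"][0])
    gW1 = [[0.0] * n_in for _ in range(n_hidden)]
    gb1 = [0.0] * n_hidden
    gW2 = [0.0] * n_hidden
    gb2 = 0.0
    for x, y in data:
        hidden, out = forward(x, params)
        # Error signal at the output: derivative of squared error through the sigmoid.
        delta_out = 2.0 * (out - y) * out * (1.0 - out)
        gb2 += delta_out
        for j in range(n_hidden):
            gW2[j] += delta_out * hidden[j]
            # Propagate the error backwards to hidden neuron j.
            delta_h = delta_out * params["W2"][j] * hidden[j] * (1.0 - hidden[j])
            gb1[j] += delta_h
            for i in range(n_in):
                gW1[j][i] += delta_h * x[i]
    # Move every weight a small step against its averaged gradient.
    scale = lr / len(data)
    params["b2"] -= scale * gb2
    for j in range(n_hidden):
        params["W2"][j] -= scale * gW2[j]
        params["b1"][j] -= scale * gb1[j]
        for i in range(n_in):
            params["W1"][j][i] -= scale * gW1[j][i]

# Toy dataset (an assumption for illustration): the XOR function.
XOR_DATA = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]

if __name__ == "__main__":
    params = init_params(n_in=2, n_hidden=3, seed=0)
    print("loss before training:", mean_squared_error(XOR_DATA, params))
    for _ in range(2000):
        train_step(XOR_DATA, params, lr=0.5)
    print("loss after training:", mean_squared_error(XOR_DATA, params))
```

Running the script prints the loss before and after training, which should fall as the weights are refined. How well such a tiny network fits the data depends on the random starting weights, the learning rate, and the number of steps, all of which are arbitrary choices here.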

📊 Key Facts & Numbers

The scale of deep learning is staggering. Training state-of-the-art models often requires datasets with millions or even billions of data points; for instance, OpenAI's GPT-4 model was reportedly trained on an enormous corpus of text and code. The computational demands are equally immense, with large models requiring thousands of GPUs running for weeks or months, costing millions of dollars in cloud computing fees. Parameter counts have grown by orders of magnitude as well: AlexNet had roughly 60 million parameters, while GPT-3 has 175 billion.

👥 Key People & Organizations

Several pivotal figures have shaped the field of deep learning. Geoffrey Hinton, often dubbed the 'Godfather of Deep Learning', has been instrumental through his work on neural networks and backpropagation. Yann LeCun pioneered CNNs, and Yoshua Bengio has focused on deep learning for representation learning and generative models; the three shared the 2018 ACM A.M. Turing Award for their work on deep neural networks. Beyond these key researchers, Andrew Ng has been crucial in democratizing deep learning education through platforms like Coursera. Industry labs such as Google AI, Meta AI, Microsoft Research, and OpenAI are major drivers of research and development, employing thousands of researchers and investing billions in AI infrastructure.

🌍 Cultural Impact & Influence

Deep learning techniques have permeated nearly every facet of modern culture and technology. In entertainment, they power recommendation engines on platforms like Netflix and YouTube, personalize content feeds on Facebook, and are increasingly used in generative art and music creation. The film industry employs deep learning for visual effects, deepfakes, and even script analysis. In communication, they underpin the natural language processing capabilities of virtual assistants like Siri and Alexa, and drive the accuracy of machine translation services. The ubiquity of deep learning has also fueled a cultural fascination with AI, leading to widespread discussions about its potential, its risks, and its philosophical implications, as seen in countless books, documentaries, and science fiction narratives, from Westworld to Ex Machina.

⚡ Current State & Latest Developments

The current landscape of deep learning is defined by rapid iteration and the pursuit of ever-larger and more capable models. The development of Large Language Models (LLMs) has dominated headlines, showcasing remarkable abilities in text generation, summarization, and coding. Simultaneously, advancements continue in multimodal learning, where models can process and integrate information from various sources like text, images, and audio, exemplified by models like Google's Gemini. The focus is also shifting towards efficiency and accessibility, with research into smaller, more specialized models and techniques for on-device AI, aiming to reduce the immense computational cost and environmental impact associated with training massive networks.

🤔 Controversies & Debates

Deep learning is not without its controversies and ethical quandaries. A primary concern is the potential for bias embedded within training data, which can lead to discriminatory outcomes in applications like hiring, loan applications, and facial recognition systems, as highlighted by research from organizations like the AI Now Institute. The immense energy consumption of training large models raises significant environmental concerns, contributing to carbon emissions. Furthermore, the development of increasingly sophisticated generative models fuels debates around misinformation, intellectual property rights, and the future of creative professions. The 'black box' nature of many deep learning models, where their decision-making processes are opaque, also poses challenges for accountability and trust, particularly in high-stakes domains like healthcare and autonomous driving. The debate over AGI and the existential risks associated with superintelligence also looms large.

🔮 Future Outlook & Predictions

The future of deep learning points towards more integrated, efficient, and perhaps even more autonomous AI systems. Researchers are actively exploring novel neural network architectures and training methodologies to overcome current limitations, such as the need for massive labeled datasets and susceptibility to adversarial attacks. The pursuit of AGI remains a long-term goal, with some predicting breakthroughs within the next decade, while others remain skeptical. We can expect to see deeper integration of deep learning into scientific discovery, accelerating research in fields like drug design, materials science, and climate modeling. The development of neuro-symbolic AI, which combines deep learning's pattern recognition with symbolic reasoning, is another promising avenue for creating more robust and interpretable AI. The ethical frameworks and regulatory bodies surrounding AI will also continue to evolve, attempting to keep p

Key Facts

- Category: technology

- Type: topic